Exponential family

22 January 2013

Here we give a brief description of the exponential family with some examples, including the Bernoulli and binomial distributions. We also touch upon the idea of curved exponential family distributions. These concepts are then used to define the Ising and Potts models. Most of this material is described in Wainwright and Jordan (2008) and Koller and Friedman (2009). These books also show that the exponential family arises as the distribution $p$ that maximizes the entropy $H(p)$ subject to constraints on the expected values of the sufficient statistics. (See also Park and Newman, 2004, for this point.)

Definition: Exponential family

Let $X=(X_1,X_2,\ldots,X_m)$ be a random vector with values in $\mathcal{X}^m=\mathcal{X}_1\times\cdots\times\mathcal{X}_m$. Then the distribution of $X$ belongs to the exponential family if its density with respect to a base measure $\nu$ can be written, for each configuration $x$, as

\begin{align*}

p_\theta(x) = \exp(\langle\theta,\phi(x)\rangle - A(\theta))

\end{align*}

where $\langle\theta,\phi(x)\rangle=\sum_{\alpha\in\mathcal{I}}\theta_\alpha\phi_\alpha(x)$ is the inner product, $\phi=(\phi_\alpha, \alpha\in \mathcal{I})$ is a collection of functions, each mapping a configuration $x\in \mathcal{X}^m$ to $\mathbb{R}$, and $A(\theta)$ is the log normalizing constant (also called the log partition function or cumulant function)

\begin{align*}

A(\theta) = \log \int_{\mathcal{X}^m} \exp\langle\theta,\phi(x)\rangle d\nu(x).

\end{align*}

Here $\mathcal{I}$ is an index set with $|\mathcal{I}|=d$. The functions $\phi_\alpha$ are called sufficient statistics or potential functions. To each $\phi_\alpha$ corresponds a parameter $\theta_\alpha$, giving $\theta=(\theta_\alpha, \alpha\in\mathcal{I})$. When $\mathcal{X}^m$ is discrete, $\nu$ is usually the counting measure and the integral becomes a sum.
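For a finite state space the definition can be evaluated directly. The sketch below is illustrative only: it assumes $\nu$ is the counting measure, enumerates all configurations to compute $A(\theta)$ (feasible only for small $m$), and the helper names `log_partition` and `density` as well as the choice $\phi(x)=(\sum_s x_s)$ are hypothetical.

```python
import itertools
import math


def log_partition(theta, phi, states):
    """A(theta) = log sum_x exp(<theta, phi(x)>), counting measure on a finite set."""
    scores = [sum(t * f for t, f in zip(theta, phi(x))) for x in states]
    top = max(scores)  # shift by the maximum (log-sum-exp trick) for numerical stability
    return top + math.log(sum(math.exp(s - top) for s in scores))


def density(theta, phi, states):
    """Return a dict x -> p_theta(x) = exp(<theta, phi(x)> - A(theta))."""
    a = log_partition(theta, phi, states)
    return {x: math.exp(sum(t * f for t, f in zip(theta, phi(x))) - a) for x in states}


# Hypothetical example: m = 3 binary variables with phi(x) = (x_1 + x_2 + x_3,).
states = list(itertools.product([0, 1], repeat=3))
p = density([0.4], lambda x: (sum(x),), states)
print(sum(p.values()))  # approximately 1.0
```

Because the sum factorizes here, $A(\theta)=3\log(1+e^{0.4})$, which gives an independent check on the enumeration.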

Example 1: Bernoulli variables

Let $X\in \mathcal{X}=\{0,1\}$ be a Bernoulli random variable with probability of success $P(X=1)=\pi$. Define the sufficient statistic $\phi(x)=\mathbb{I}(x=1)$, which is $1$ if $x=1$ and $0$ otherwise, and the natural parameter $\theta=\log(\pi/(1-\pi))$. Then $\exp\langle \theta,\phi(x)\rangle$ equals $\pi/(1-\pi)$ for $x=1$ and $1$ for $x=0$, so that

\begin{align*}

A(\theta)=\log\sum_{x\in \mathcal{X}}\exp\langle\theta,\phi(x)\rangle=\log\left(1+e^{\theta}\right)=\log\left(1+\frac{\pi}{1-\pi}\right)=-\log(1-\pi).

\end{align*}

Hence

\begin{align*}

p_\theta(x) = \exp(\langle\theta,\phi(x)\rangle - A(\theta))=\pi^{x}(1-\pi)^{1-x},

\end{align*}

so $p_\theta(x=1)=\pi$, which shows that the Bernoulli distribution is of the exponential family type with dimension $|\mathcal{I}|=d=1$. $\bigstar$
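The Bernoulli calculation can be checked numerically. This is a small sketch with a hypothetical success probability $\pi=0.3$; it confirms that $A(\theta)=\log(1+e^\theta)=-\log(1-\pi)$ and that the exponential family form returns $\pi$ and $1-\pi$.

```python
import math

pi = 0.3                              # hypothetical success probability P(X = 1)
theta = math.log(pi / (1 - pi))       # natural parameter (log-odds)
A = math.log(1 + math.exp(theta))     # A(theta) = log(e^0 + e^theta)


def p(x):
    """p_theta(x) = exp(theta * phi(x) - A(theta)) with phi(x) = I(x = 1)."""
    phi = 1 if x == 1 else 0
    return math.exp(theta * phi - A)


print(p(1), p(0), A, -math.log(1 - pi))  # p(1) ~ 0.3, p(0) ~ 0.7, A ~ -log(0.7)
```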

Example 2: Binomial variable

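Let each $X_s\in\mathcal{X}=\{0,1\}$, $s=1,\ldots,m$, be an independent Bernoulli random variable with probability of success $P(X_s=1)=\pi$. Then the vector $X=(X_1,X_2,\ldots,X_m)$ takes values in $\mathcal{X}^m=\{0,1\}^m$. Let $\phi_1(x)=\sum_s x_s$ and $\phi_2(x)=m-\phi_1(x)$ be the sufficient statistics. The probability mass function of a configuration $x$ is

\begin{align*}

p(x) = \pi^{\phi_1(x)}(1-\pi)^{m-\phi_1(x)},

\end{align*}

and the number of successes $\phi_1(X)$ has the binomial distribution $P(\phi_1(X)=k)=\binom{m}{k}\pi^{k}(1-\pi)^{m-k}$. To see that this belongs to the exponential family, write

\begin{align*}

\log p(x) &=\phi_1(x)\log\pi+(m-\phi_1(x))\log(1-\pi)\\

& =\phi_1(x)\log\frac{\pi}{1-\pi} + m\log(1-\pi),

\end{align*}

so that $\phi(x)=(\phi_1(x),\phi_2(x))=(\sum_sx_s,m-\sum_s x_s)$ and $\theta=(\log\pi,\log(1-\pi))$. The log partition function is

\begin{align*}

A(\theta)=\log\sum_{x\in\{0,1\}^m}\exp\langle\theta,\phi(x)\rangle=m\log\left(e^{\theta_1}+e^{\theta_2}\right),

\end{align*}

which equals $0$ at $\theta=(\log\pi,\log(1-\pi))$. For the count $\phi_1(X)$ itself, the factor $\binom{m}{k}$ does not depend on $\theta$ and is absorbed into the base measure $\nu$. Here $|\mathcal{I}|=d=2$ since we use both $\phi_1(x)=\sum_sx_s$ and $\phi_2(x)=m-\sum_sx_s$. The second line of the derivation gives a minimal representation with $d=1$: $\theta=\log(\pi/(1-\pi))$, $\phi(x)=\phi_1(x)$ and $A(\theta)=-m\log(1-\pi)=m\log(1+e^{\theta})$. $\bigstar$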
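As a sanity check on Example 2, the sketch below enumerates $\{0,1\}^m$ for hypothetical values $m=4$ and $\pi=0.35$. It verifies that $A(\theta)=m\log(e^{\theta_1}+e^{\theta_2})$ vanishes on the curve, that the minimal representation reproduces $p(x)$, and that weighting by $\binom{m}{k}$ gives a proper distribution for the count. The variable names are illustrative.

```python
import itertools
import math

m, pi = 4, 0.35  # hypothetical number of variables and success probability
states = list(itertools.product([0, 1], repeat=m))


def phi(x):
    """Sufficient statistics (phi_1, phi_2) = (sum_s x_s, m - sum_s x_s)."""
    k = sum(x)
    return (k, m - k)


# Overcomplete parameter on the curve: theta = (log pi, log(1 - pi)).
theta = (math.log(pi), math.log(1 - pi))
A = math.log(sum(math.exp(theta[0] * f1 + theta[1] * f2) for f1, f2 in map(phi, states)))
A_closed = m * math.log(math.exp(theta[0]) + math.exp(theta[1]))  # m log(e^t1 + e^t2)

# Minimal representation: theta = log(pi / (1 - pi)), statistic phi_1, A = -m log(1 - pi).
theta_min = math.log(pi / (1 - pi))
A_min = -m * math.log(1 - pi)


def p_min(x):
    """p(x) in the minimal one-parameter form."""
    return math.exp(theta_min * sum(x) - A_min)


# Law of the count phi_1(X): the factor comb(m, k) plays the role of the base measure.
count_pmf = [math.comb(m, k) * math.exp(theta_min * k - A_min) for k in range(m + 1)]
print(A, A_closed, sum(count_pmf))  # A and A_closed ~ 0, sum ~ 1
```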

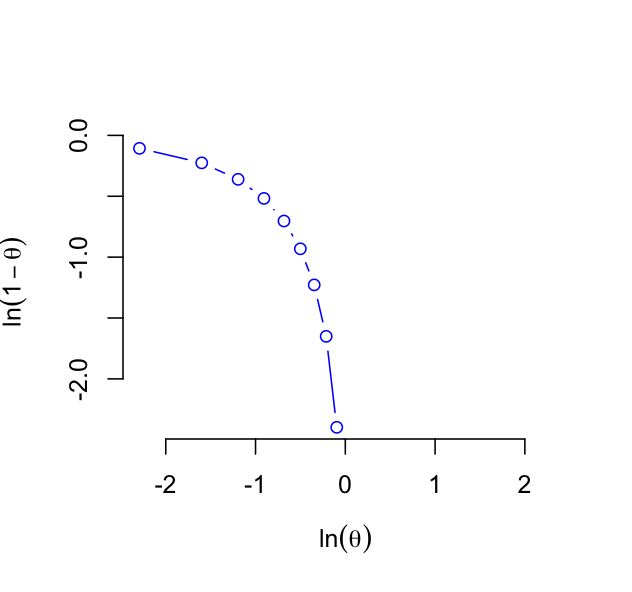

Suppose that in Example 1 with the Bernoulli random variable $X$ the parameterization $\theta=(\log\pi,\log(1-\pi))$ was chosen with sufficient statistic $\phi(x)=(\mathbb{I}(x=1),\mathbb{I}(x=0))$ of dimension $2$. Then again the Bernoulli distribution is of exponential family type, but the representation is overcomplete, because $\mathbb{I}(x=1)+\mathbb{I}(x=0)=1$ for every $x$. As $\pi$ ranges over $(0,1)$, the points $(\log\pi,\log(1-\pi))$ trace out a curve in $\mathbb{R}^2$ on which $e^{\theta_1}+e^{\theta_2}=1$, see Figure 1. The parameter is restricted to this curve, which is not a convex set; this is what makes the family a curved exponential family. (See Koller and Friedman, 2009, p. 265.)

Figure 1: Curved exponential family, Binomial $(\log\theta,\log(1-\theta))$.
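The non-convexity of the curve can be seen numerically: the midpoint of two points on the curve falls off it. This sketch uses two hypothetical success probabilities, $0.2$ and $0.7$; the helper `on_curve` is illustrative. (Analytically, the midpoint gives $\sqrt{ab}+\sqrt{(1-a)(1-b)}<1$ for $a\neq b$ by the Cauchy–Schwarz inequality.)

```python
import math


def on_curve(theta, tol=1e-12):
    """True if theta = (log pi, log(1 - pi)) for some pi in (0, 1),
    i.e. exp(theta_1) + exp(theta_2) = 1."""
    return abs(math.exp(theta[0]) + math.exp(theta[1]) - 1) < tol


a = (math.log(0.2), math.log(0.8))
b = (math.log(0.7), math.log(0.3))
mid = tuple((u + v) / 2 for u, v in zip(a, b))  # midpoint of the segment from a to b

print(on_curve(a), on_curve(b), on_curve(mid))  # True True False
```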

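Because the sufficient statistics satisfy the linear constraint $\phi_1(x)+\phi_2(x)=1$, this representation is not minimal, and different values of $\theta$ lead to the same probability distribution: $\theta$ and $\theta+c(1,1)$ give the same $p_\theta$ for every $c\in\mathbb{R}$ (Wainwright and Jordan, 2008, p. 40).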
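The non-identifiability can be illustrated with a short sketch, assuming the overcomplete Bernoulli statistic $\phi(x)=(\mathbb{I}(x=1),\mathbb{I}(x=0))$ normalized by $A(\theta)$. The shift $c=2.5$ and the function name `bernoulli_overcomplete` are hypothetical.

```python
import math


def bernoulli_overcomplete(theta):
    """p_theta(x) for phi(x) = (I(x = 1), I(x = 0)), normalized by A(theta)."""
    A = math.log(math.exp(theta[0]) + math.exp(theta[1]))
    return {1: math.exp(theta[0] - A), 0: math.exp(theta[1] - A)}


theta = (math.log(0.3), math.log(0.7))   # a point on the curve
c = 2.5
shifted = (theta[0] + c, theta[1] + c)   # theta + c(1, 1), no longer on the curve
p, q = bernoulli_overcomplete(theta), bernoulli_overcomplete(shifted)
print(p, q)  # the two distributions coincide (up to rounding)
```

The shifted parameter is off the curve, yet after normalization it defines the same distribution, so $\theta$ is not identifiable from $p_\theta$ in this representation.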

Definition: Sufficient statistic

A sufficient statistic is a function $\phi(x)$ of the data such that inference based on the likelihood $p_\theta(x)$ depends on the data only through $\phi(x)$; in particular, likelihood ratios depend on $x$ only through $\phi(x)$. That is, if different data $y$ were obtained with $\phi(x) = \phi(y)$, then the likelihood ratios are the same,

\begin{align*}

\frac{p_{\theta_1}(x)}{p_{\theta_2}(x)}=\frac{p_{\theta_1}(y)}{p_{\theta_2}(y)}

\end{align*}

for all $\theta_1$ and $\theta_2$. (See Young and Smith, 2005, p. 91.)

Example 3: Binomial variable

We consider again the binomial model from Example 2 and write $p_{\pi}$ for the distribution with success probability $\pi$. The likelihood ratio for the success probabilities $\pi_1$ and $\pi_2$ is

\begin{align*}

\frac{p_{\pi_1}(x)}{p_{\pi_2}(x)} = \left(\frac{\pi_1}{\pi_2}\right)^{\phi_1(x)}\left(\frac{1-\pi_1}{1-\pi_2}\right)^{m-\phi_1(x)}.

\end{align*}

Then for configurations $x$ and $y$ with $\phi_1(x)=\phi_1(y)$, the ratio of the two likelihood ratios is

\begin{align*}

\frac{p_{\pi_1}(x)/p_{\pi_2}(x)}{p_{\pi_1}(y)/p_{\pi_2}(y)}=\left(\frac{\pi_1}{\pi_2}\right)^{\phi_1(x)-\phi_1(y)}\left(\frac{1-\pi_1}{1-\pi_2}\right)^{\phi_1(y)-\phi_1(x)}=1,

\end{align*}

so the likelihood ratios are equal, showing that $\phi_1(x)=\sum_s x_s$ is a sufficient statistic. $\bigstar$
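The sufficiency argument can be checked on concrete configurations. In this sketch $m=5$, $\pi_1=0.2$, $\pi_2=0.6$ and the configurations are hypothetical: two different configurations with the same number of successes give identical likelihood ratios, while one with a different count does not.

```python
import math

m = 5  # hypothetical number of Bernoulli variables


def likelihood(x, pi):
    """Probability of configuration x under i.i.d. Bernoulli(pi) variables."""
    k = sum(x)
    return pi ** k * (1 - pi) ** (m - k)


def ratio(x, pi1=0.2, pi2=0.6):
    """Likelihood ratio p_{pi1}(x) / p_{pi2}(x)."""
    return likelihood(x, pi1) / likelihood(x, pi2)


x = (1, 1, 0, 0, 1)  # phi_1(x) = 3
y = (0, 1, 1, 1, 0)  # a different configuration with phi_1(y) = 3
z = (1, 0, 0, 0, 0)  # phi_1(z) = 1

print(math.isclose(ratio(x), ratio(y)), math.isclose(ratio(x), ratio(z)))  # True False
```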

Example 4: Multinomial variable

Suppose that $X_s\in \mathcal{X}=\{0,1,2,\ldots,r-1\}$ for $s=1,\ldots,m$, independently with $P(X_s=j)=\pi_j$, so that the vector $X$ takes values in $\mathcal{X}^m$. Let $n_j(x)=\sum_{s}\mathbb{I}(x_s=j)$ be the number of variables in category $j$. A configuration $x\in\mathcal{X}^m$ has probability $\prod_{j=0}^{r-1}\pi_j^{n_j(x)}$, and the counts $(n_0,\ldots,n_{r-1})$ have the multinomial distribution with probability mass function

\begin{align*}

P(n_0,n_1,\ldots,n_{r-1}) = \frac{m!}{n_0!n_1!\cdots n_{r-1}!}\pi_0^{n_0}\pi_1^{n_1}\cdots \pi_{r-1}^{n_{r-1}}.

\end{align*}

Take $\theta=(\log\pi_0,\log\pi_1,\ldots,\log\pi_{r-1})$, with dimension $r$. Define the indicator functions $\mathbb{I}_{s;j}(x_s)=1$ if $x_s=j$ and $0$ otherwise, for $s\in \{1,2,\ldots,m\}$ and $j\in\{0,1,\ldots,r-1\}$, and let $\phi(x_s)=(\mathbb{I}_{s;0}(x_s),\mathbb{I}_{s;1}(x_s),\ldots,\mathbb{I}_{s;r-1}(x_s))$ be the sufficient statistics. Then

\begin{align*}

p_\theta(x)=\exp\left(\sum_{s=1}^{m}\langle\theta,\phi(x_s)\rangle - A(\theta)\right)

\end{align*}

where $A(\theta)=\log\sum_{x\in\mathcal{X}^m}\exp(\sum_s\langle\theta,\phi(x_s)\rangle)=m\log\sum_{j=0}^{r-1}e^{\theta_j}$. This representation is overcomplete since $\sum_{j=0}^{r-1}\mathbb{I}_{s;j}(x_s)=1$ for every $s$, or equivalently $n_0(x)=m-\sum_{j=1}^{r-1}n_j(x)$; only $r-1$ of the statistics are free, so the minimal dimension is $r-1$. A minimal representation uses the parameter $\theta=(\log(\pi_1/\pi_0),\log(\pi_2/\pi_0),\ldots,\log(\pi_{r-1}/\pi_0))$ and the sufficient statistics $\phi(x_s)=(\mathbb{I}_{s;1}(x_s),\mathbb{I}_{s;2}(x_s),\ldots,\mathbb{I}_{s;r-1}(x_s))$, with $A(\theta)=-m\log\pi_0$. $\bigstar$
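A brute-force check of Example 4, under hypothetical values $r=3$, $m=4$ and $\pi=(0.5,0.3,0.2)$: it compares the overcomplete and minimal representations by enumerating $\mathcal{X}^m$, and confirms that summing configuration probabilities over a fixed count vector recovers the multinomial mass. The helpers `score` and `pmf` are illustrative names, and enumeration is only practical for small $r$ and $m$.

```python
import itertools
import math

r, m = 3, 4
pi = [0.5, 0.3, 0.2]  # hypothetical category probabilities pi_0, pi_1, pi_2
states = list(itertools.product(range(r), repeat=m))


def score(x, theta, cats):
    """sum_s <theta, phi(x_s)> with phi(x_s) = (I_{s;j}(x_s) for j in cats)."""
    return sum(t * (1 if xs == j else 0) for xs in x for t, j in zip(theta, cats))


def pmf(theta, cats):
    """Return (dict x -> p_theta(x), A(theta)) by enumerating X^m."""
    A = math.log(sum(math.exp(score(x, theta, cats)) for x in states))
    return {x: math.exp(score(x, theta, cats) - A) for x in states}, A


# Overcomplete: theta_j = log pi_j for j = 0, ..., r-1.
p_over, A_over = pmf([math.log(q) for q in pi], range(r))
# Minimal: theta_j = log(pi_j / pi_0) for j = 1, ..., r-1.
p_min, A_min = pmf([math.log(pi[j] / pi[0]) for j in range(1, r)], range(1, r))

# The counts n_j(x) recover the multinomial pmf, e.g. for n = (2, 1, 1).
n = (2, 1, 1)
mass = sum(p for x, p in p_min.items() if tuple(x.count(j) for j in range(r)) == n)
multinomial = (math.factorial(m) / math.prod(math.factorial(k) for k in n)
               * math.prod(q ** k for q, k in zip(pi, n)))
print(A_over, A_min, -m * math.log(pi[0]), mass, multinomial)
```

Both representations assign the same probability to every configuration; only the parameterization and $A(\theta)$ differ ($0$ versus $-m\log\pi_0$).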

References

- Barrat, A., Barthélemy, M. and Vespignani, A. (2009). Dynamical processes on complex networks. Cambridge University Press.

- Besag, J. (1974). Spatial interaction and the statistical analysis of lattice systems. Journal of the Royal Statistical Society, Series B, 36(2): 192-236.

- Bühlmann, P. and van de Geer, S. (2011). Statistics for High-Dimensional Data: Methods, Theory and Applications. Springer.

- Durrett, R. (2007). Random graph dynamics. Cambridge University Press.

- Grimmett, G. (2010). Probability on graphs. Cambridge University Press.

- Koller, D. and Friedman, N. (2009). Probabilistic Graphical Models. MIT Press.

- Kolaczyk, E.D. (2009). Statistical analysis of network data: Methods and models. Springer.

- Park, J. and Newman, M.E.J. (2004). The statistical mechanics of networks. Physical Review E, 70: 058102.

- Wainwright, M. and Jordan, M. (2008). Graphical models, exponential families, and variational inference. Foundations and Trends in Machine Learning, 1(1-2): 1-305.

- Young, G.A. and Smith, R.L. (2005). Essentials of statistical inference. Cambridge University Press.